You look at its borders and its surroundings to see if it aligns well. Imagine that you want to precisely straighten a photograph on your wall. We'll discuss some best practices for this further below when talking about design recommendations. Try not to overwhelm the user with immediate pop-out effects or hover sounds. This issue should also be considered when designing visual and auditory feedback when looking at a target. This solution also allows for a mode in which the user can freely look around without being overwhelmed by involuntarily triggering something. We recommend combining eye-gaze with a voice command, hand gesture, button click or extended dwell to trigger the selection of a target (for more information, see eye-gaze and commit). Reacting to every look you make and accidentally issuing actions, because you looked at something for too long, would result in an unsatisfying experience. The moment you open your eye lids, your eyes start fixating on things in the environment.

GAZE EYE TRACKING HOW TO

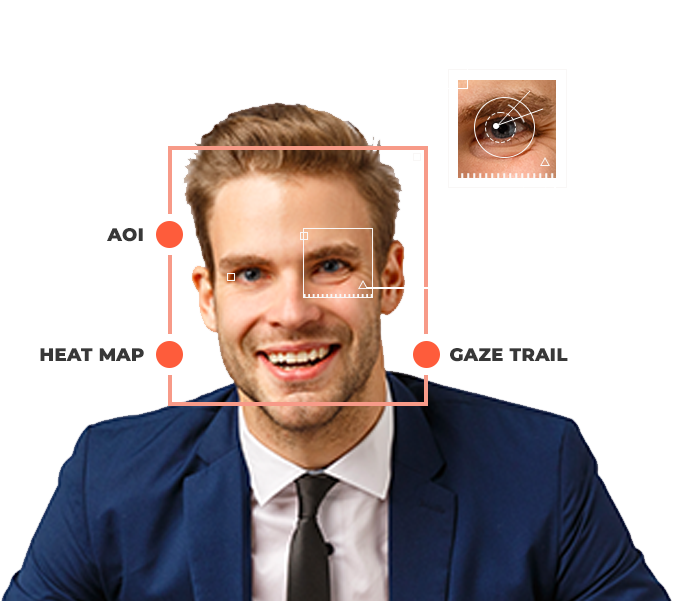

The following list discusses some challenges to consider and how to address them when working with eye-gaze input:

While eye-gaze can be used to create satisfying user experiences, which make you feel like a superhero, it's also important to know what it isn't good at to appropriately account for this.

GAZE EYE TRACKING MANUAL

This is powerful when combined with other inputs such as voice and manual input to confirm the user's intent. In a nutshell, using eye-gaze as an input offers a fast and effortless contextual input signal. This can help in various application areas ranging from more effectively evaluating different designs to aiding in smarter user interfaces and enhanced social cues for remote communication. Often described by users as "mind reading", information about a user's eye movements lets the system know which target the user plans to engage.Įye-gaze can provide a powerful supporting input for hand and voice input building on years of experience from users based on their hand-eye coordination.Īnother important benefit is the possibility to infer what a user is paying attention to. The eye muscle is the fastest reacting muscle in the human body.īarely any physical movements are necessary. In this section, we summarize the key advantages and challenges to consider when designing your application. Eye-gaze input design guidelinesīuilding an interaction that takes advantage of fast-moving eye targeting can be challenging.

GAZE EYE TRACKING FULL

Download and enjoy the full experience here. This video was taken from the "Designing Holograms" HoloLens 2 app. When you've finished, continue on for a more detailed dive into specific topics. If you'd like to see Head and Eye Tracking design concepts in action, check out our Designing Holograms - Head Tracking and Eye Tracking video demo below. Head and eye tracking design concepts demo Based on these, we provide several design recommendations to help you create satisfying eye-gaze-supported user interfaces. You'll learn about key advantages and also unique challenges that come with eye-gaze input. On this page, we discuss design considerations for integrating eye-gaze input to interact with your holographic applications. While eye-gaze input is only used subtly in our Holographic Shell experience (the user interface that you see when you start your HoloLens 2), several apps, such as the "HoloLens Playground", showcase great examples on how eye-gaze input can add to the magic of your holographic experience. Eye-gaze input is still a new type of user input and there's a lot to learn. On our Eye tracking on HoloLens 2 page, we mentioned the need for each user to go through a Calibration, provided some developer guidance, and highlighted use cases for eye tracking. One of our exciting new capabilities on HoloLens 2 is eye tracking.